worthy-azure

How do I reset Crawlee's URL cache or add the same URL back to the requestQueue?

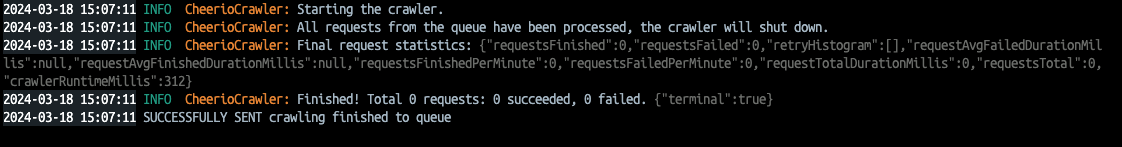

I have a CheerioCrawler running in a Docker container that listens for incoming messages from a single RabbitMQ queue (each message is a URL). The crawler runs and completes the first crawling job successfully. However, when it receives a second message whose URL is the same as the first one, it only outputs the message shown in the screenshot I've attached. In other words, the second URL is never added to the crawler's queue. What would be a solution for this? I have thought about restarting the crawler or emptying the key-value storage, but I can't get either to work. In my `crawlerConfigs`, I set `purgeOnStart` and `persistStorage` to `false`.
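For context, Crawlee's request queue identifies each request by a `uniqueKey`, which by default is derived from the normalized URL, and it skips any request whose key it has already seen, even if that request was already handled. The toy model below is plain JavaScript, not Crawlee's actual code; the `DedupQueue` class and the `#job-2` key are made up for illustration. It shows why a second identical URL never gets crawled, while the same URL with a different key would:

```javascript
// Toy model, NOT Crawlee's real implementation: a queue that skips any
// request whose uniqueKey it has already seen, even after it was handled.
class DedupQueue {
  constructor() {
    this.seenKeys = new Set(); // every uniqueKey ever added
    this.pending = []; // requests still waiting to be processed
  }

  // Returns true if the request was queued, false if it was a duplicate.
  add(request) {
    // Assumption: use the raw URL when no uniqueKey is given
    // (Crawlee normalizes the URL first; this model skips that step).
    const key = request.uniqueKey ?? request.url;
    if (this.seenKeys.has(key)) return false;
    this.seenKeys.add(key);
    this.pending.push({ ...request, uniqueKey: key });
    return true;
  }

  // Hands out the next request; its key stays in seenKeys afterwards.
  next() {
    return this.pending.shift() ?? null;
  }
}

const queue = new DedupQueue();
const url = 'https://example.com/';

const firstAdded = queue.add({ url }); // first message: queued
queue.next(); // the crawler processes and handles it
const secondAdded = queue.add({ url }); // same URL again: skipped
const thirdAdded = queue.add({ url, uniqueKey: `${url}#job-2` }); // new key: queued

console.log(firstAdded, secondAdded, thirdAdded); // true false true
```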

My code looks something like this:

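```javascript
import { CheerioCrawler, Configuration } from 'crawlee';

// Storage settings described above: don't purge the default storages
// before a run, and don't persist them to disk.
const crawlerConfigs = new Configuration({
  purgeOnStart: false,
  persistStorage: false,
});

const crawler = new CheerioCrawler({
  async requestHandler({ $, request, enqueueLinks }) {
    // `strategy` is an option of enqueueLinks itself,
    // not something set inside a transform function.
    await enqueueLinks({
      strategy: 'same-domain',
    });
  },
}, crawlerConfigs);

// websiteUrls: the URL(s) received in the RabbitMQ message.
await crawler.addRequests(websiteUrls);
await crawler.run();
```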
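One possible workaround, sketched under assumptions: give the requests from each incoming message a `uniqueKey` that includes something unique to that message, so the queue no longer treats a repeated URL as a duplicate. `toRequestsForMessage` and `messageId` are hypothetical names; `messageId` could be anything unique per message, such as a timestamp. The helper only builds plain `{ url, uniqueKey }` objects, which is a request shape Crawlee accepts:

```javascript
// Hypothetical helper (not part of Crawlee): turn the URLs from one RabbitMQ
// message into request objects whose uniqueKey is scoped to that message.
function toRequestsForMessage(urls, messageId) {
  return urls.map((url) => ({
    url,
    // Same URL + different messageId => different uniqueKey,
    // so the request queue should not deduplicate it.
    uniqueKey: `${messageId}:${url}`,
  }));
}

const first = toRequestsForMessage(['https://example.com/'], 'msg-1');
const second = toRequestsForMessage(['https://example.com/'], 'msg-2');

console.log(first[0].uniqueKey); // msg-1:https://example.com/
console.log(second[0].uniqueKey); // msg-2:https://example.com/
```

It could then be used as `await crawler.run(toRequestsForMessage(websiteUrls, messageId));`, passing the requests to `run()` directly. Note that links found by `enqueueLinks` still get their default, URL-based `uniqueKey`, so child pages handled in an earlier run may be skipped again; setting `uniqueKey` inside `transformRequestFunction` as well might cover that, but this is an untested assumption.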